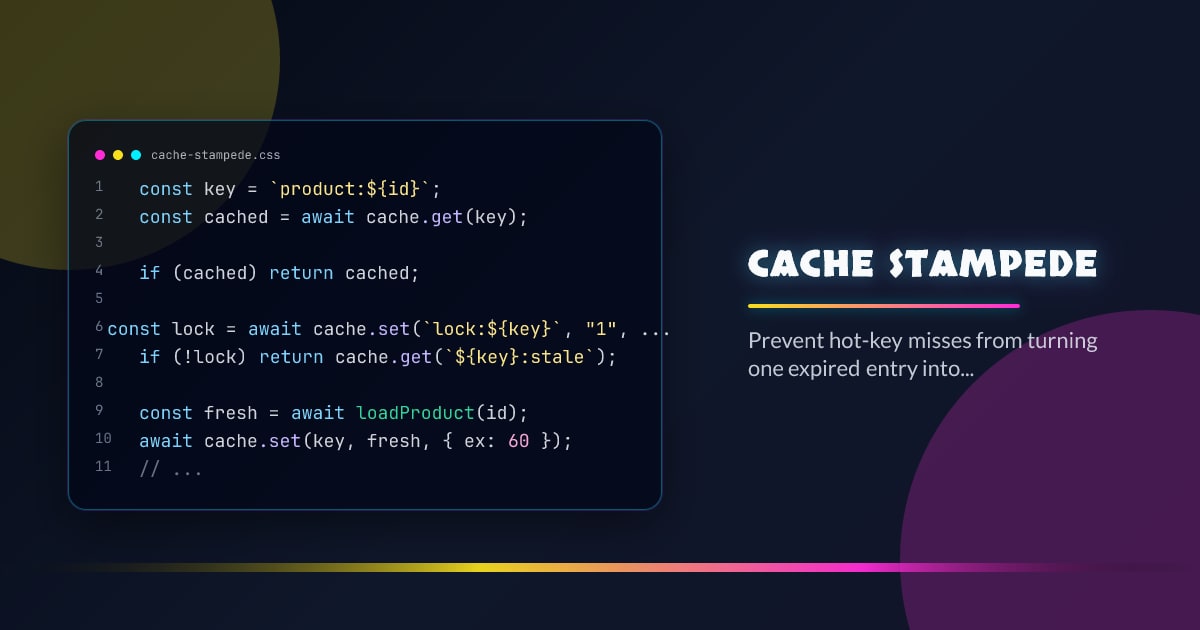

Cache Stampede

A cache stampede happens when many workers miss the same hot key and all recompute it at once. The cache was supposed to flatten load, but expiration aligned your traffic into a spike. The fix is usually not one trick. You combine single-flight, stale reads, jittered TTLs, and bounded rebuild work.

Minimal Example

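```javascript
// Assumes `cache` is a Redis-style client whose set() accepts { nx, ex }
// options, and `loadProduct(id)` is the origin loader.
async function getProduct(id) {
  const key = `product:${id}`;

  const cached = await cache.get(key);
  if (cached) return cached;

  // NX lock with a 5 s expiry: only one worker wins the right to rebuild.
  const lock = await cache.set(`lock:${key}`, "1", { nx: true, ex: 5 });
  // Losers serve the stale copy (null if no copy has been written yet).
  if (!lock) return cache.get(`${key}:stale`);

  try {
    const fresh = await loadProduct(id);
    await cache.set(key, fresh, { ex: 60 });
    // Keep a longer-lived stale copy for lock losers; 600 s is an assumed value.
    await cache.set(`${key}:stale`, fresh, { ex: 600 });
    return fresh;
  } finally {
    // Release the lock even if the rebuild throws.
    await cache.del(`lock:${key}`);
  }
}
```

What It Solves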

- Prevents synchronized expiration from multiplying backend work under peak traffic.

- Lets one worker rebuild a value while everyone else serves stale or waits briefly.

- Keeps latency spikes localized instead of letting them cascade into retries and saturation.
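The "one worker rebuilds, everyone else waits briefly" idea can also be applied inside a single process. The following is a minimal sketch, not a definitive implementation: `singleFlight` and `inFlight` are hypothetical names, and the approach only deduplicates callers within one process, so a cross-process lock is still needed when several workers share the cache.

```javascript
// In-process single-flight: concurrent callers for one key share one promise.
const inFlight = new Map();

function singleFlight(key, rebuild) {
  const pending = inFlight.get(key);
  if (pending) return pending; // join the rebuild already in progress

  const promise = Promise.resolve()
    .then(rebuild)
    .finally(() => inFlight.delete(key)); // clear on success or failure

  inFlight.set(key, promise);
  return promise;
}

// Hypothetical usage, with loadProduct as the origin loader:
// const product = await singleFlight(`product:${id}`, () => loadProduct(id));
```

If the rebuild fails, every joined caller sees the same rejection, and the entry is cleared so the next request can try again.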

Failure Modes

- Setting the same TTL on every key so rebuilds align on the minute.

- Locking without a stale fallback and turning misses into user-visible latency cliffs.

- Recomputing an expensive object without any concurrency budget or timeout.
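The last failure mode, rebuilding without a concurrency budget or timeout, can be guarded against with a small limiter and a timeout wrapper. This is a sketch under assumptions: `createLimiter`, `withTimeout`, and `boundedRebuild` are hypothetical helpers, and the budget of 4 and the 2000 ms timeout are placeholder values to tune for your origin.

```javascript
// Counting semaphore: at most `limit` tasks run at once; the rest queue.
function createLimiter(limit) {
  let active = 0;
  const waiting = [];

  const release = () => {
    const next = waiting.shift();
    if (next) next(); // hand the slot straight to the next waiter
    else active -= 1;
  };

  return async function run(task) {
    if (active < limit) active += 1;
    else await new Promise((resolve) => waiting.push(resolve));
    try {
      return await task();
    } finally {
      release();
    }
  };
}

// Rejects if `promise` has not settled within `ms` milliseconds.
// Note: this stops waiting; it does not cancel the underlying work.
function withTimeout(promise, ms) {
  let timer;
  const timeout = new Promise((_, reject) => {
    timer = setTimeout(
      () => reject(new Error(`rebuild timed out after ${ms} ms`)),
      ms
    );
  });
  return Promise.race([promise, timeout]).finally(() => clearTimeout(timer));
}

const rebuildLimiter = createLimiter(4); // assumed budget

function boundedRebuild(task, timeoutMs = 2000) {
  return rebuildLimiter(() => withTimeout(task(), timeoutMs));
}
```

A caller might wrap the origin call as `boundedRebuild(() => loadProduct(id))` and fall back to the stale copy when it rejects.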

Production Checklist

- Add TTL jitter to hot keys so expirations spread naturally.

- Use single-flight or per-key locking with short expirations and stale fallback.

- Monitor rebuild rate and origin load separately from cache hit ratio.
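For the first checklist item, TTL jitter can be as small as one helper. The sketch below assumes a uniform spread of plus or minus 10 percent; `jitteredTtl` is a hypothetical name, and the injectable `random` parameter exists only to make the function easy to test.

```javascript
// Returns the base TTL adjusted by a random offset within ±`spread` (a fraction).
function jitteredTtl(baseSeconds, spread = 0.1, random = Math.random) {
  const offset = (random() * 2 - 1) * spread; // uniform in [-spread, +spread)
  return Math.max(1, Math.round(baseSeconds * (1 + offset)));
}

// Hypothetical usage with the cache client from the example:
// await cache.set(key, fresh, { ex: jitteredTtl(60) }); // roughly 54 to 66 s
```

Keys written in the same second then expire across a window of several seconds instead of all at once.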

Closing

Caches fail in crowds, not in diagrams. Design rebuild paths for contention first, and the happy path will usually take care of itself.