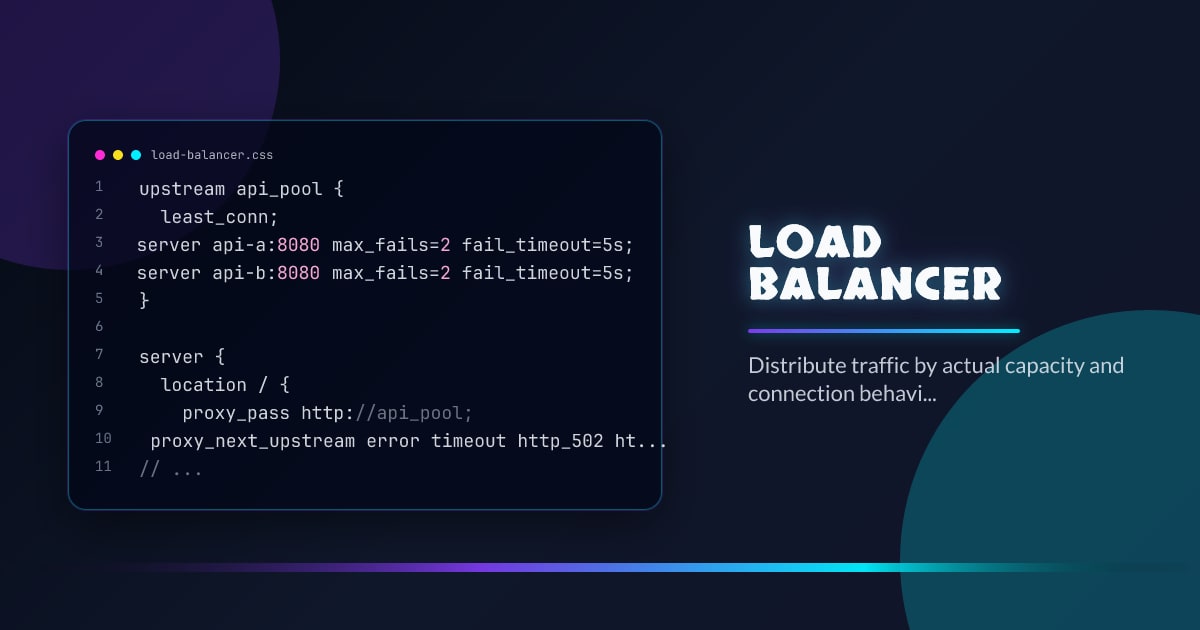

Load Balancer

A load balancer decides which instance receives the next request, but the real job is broader: isolate failures, stop sending traffic to unhealthy nodes, and absorb uneven capacity across zones and versions. The algorithm matters less than the operational model around health checks, draining, timeouts, and long-lived connections.

Minimal Example

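    upstream api_pool {
        # Prefer the server with the fewest active connections.
        least_conn;

        # Passive checks: 2 failed attempts within 5s mark a server
        # unavailable for the next 5s.
        server api-a:8080 max_fails=2 fail_timeout=5s;
        server api-b:8080 max_fails=2 fail_timeout=5s;
    }

    server {
        location / {
            proxy_pass http://api_pool;

            # Retry on the next server after a connection error, a timeout,
            # or a 502/503 response.
            proxy_next_upstream error timeout http_502 http_503;
        }
    }

What It Solves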

- Spreads traffic across replicas so no single instance becomes the hot spot by default.

- Removes failed or draining nodes from rotation before users discover them.

- Supports rolling deploys and zonal failover without changing client configuration.
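
A sketch of how the Minimal Example's pool could express these three points in open-source nginx. The hosts `api-c` and `api-d.zone-b`, the weight, and the choice of which node is being deployed are assumptions for illustration, not part of the original configuration.

```nginx
upstream api_pool {
    least_conn;

    # Assumed to be the larger instance, so it is weighted more heavily.
    server api-a:8080 weight=2 max_fails=2 fail_timeout=5s;
    server api-b:8080 max_fails=2 fail_timeout=5s;

    # Hypothetical node taken out of rotation for a deploy;
    # remove "down" and reload once the new version is ready.
    server api-c:8080 down;

    # Hypothetical standby in another zone; it receives traffic
    # only when the primary servers are unavailable.
    server api-d.zone-b:8080 backup;
}
```

Clients keep pointing at the same address; only the upstream block changes. Applying it with `nginx -s reload` lets the old worker processes finish their in-flight requests before they exit.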

Failure Modes

- Using round robin for workloads dominated by sticky or long-lived connections, where an equal share of new connections does not mean an equal share of load.

- Treating a TCP accept as health while the application is already degraded behind it.

- Ignoring cross-zone data transfer cost and latency when traffic sloshes between zones.
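
One way to narrow the gap described in the second bullet, relying only on passive checks: nginx decides what counts as a failed attempt from `proxy_next_upstream`, so a backend that accepts connections but answers slowly is noticed only once a timeout fires. Both timeouts below default to 60 seconds; the shorter values are illustrative assumptions, not recommendations.

```nginx
location / {
    proxy_pass http://api_pool;

    # Fail fast if the backend does not accept the connection (assumed value).
    proxy_connect_timeout 1s;

    # Treat a backend that stalls between two reads as failed (assumed value).
    proxy_read_timeout 5s;

    # Count 500 and 504 responses as failed attempts as well.
    proxy_next_upstream error timeout http_500 http_502 http_503 http_504;

    # Cap the number of attempts so one bad request cannot walk the whole pool.
    proxy_next_upstream_tries 2;
}
```

A short `proxy_read_timeout` would also cut long-lived streams, so routes that carry them would likely need their own `location` with a longer value.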

Production Checklist

- Use health checks that reflect application readiness, not just port liveness.

- Drain connections explicitly during deploys and autoscaling events.

- Measure load by concurrency and tail latency, not only request count.
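
For the last item, one option is to log upstream timing per request and compute percentiles from the log. This is a sketch: the format name `upstream_timing` and the log path are hypothetical, while the variables are standard nginx ones.

```nginx
# log_format belongs in the http context.
log_format upstream_timing '$remote_addr "$request" $status '
                           'upstream=$upstream_addr '
                           'upstream_status=$upstream_status '
                           'connect=$upstream_connect_time '
                           'response=$upstream_response_time '
                           'total=$request_time';

server {
    location / {
        proxy_pass http://api_pool;

        # Hypothetical path; one line per request with per-backend timing.
        access_log /var/log/nginx/api_upstream.log upstream_timing;
    }
}
```

When a request is retried on another server, `$upstream_addr` and the timing variables list one value per attempt, separated by commas, which makes retries visible in the same log.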

Closing

A load balancer is only as smart as its health model. If it cannot see degradation, it will distribute failure efficiently.