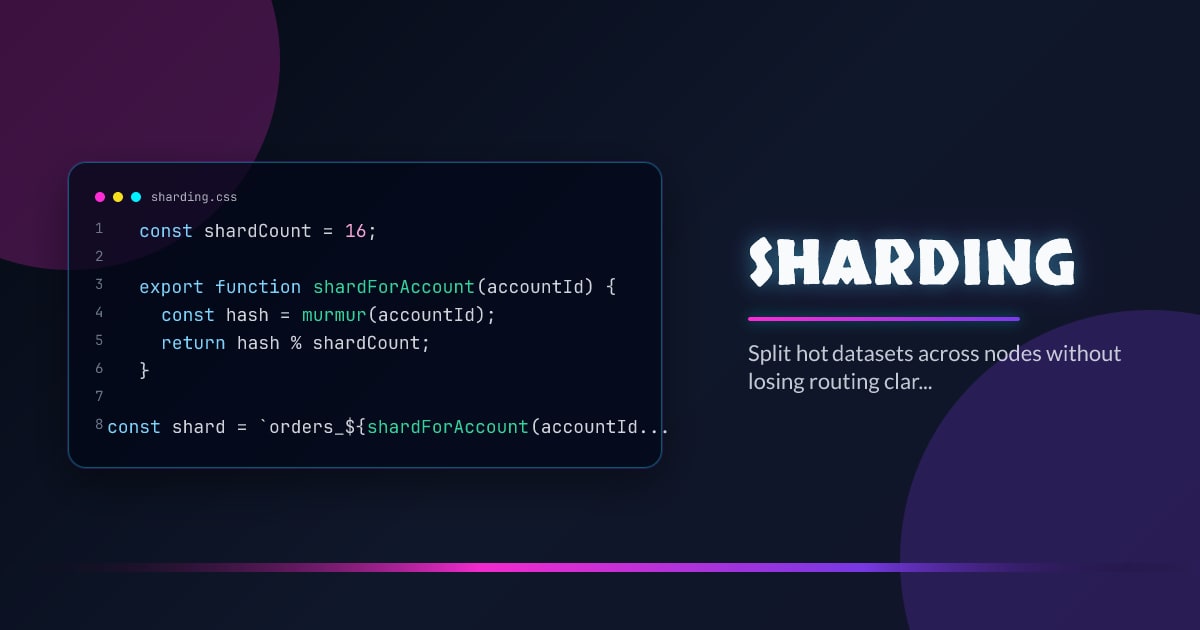

Sharding

Sharding partitions one logical dataset across several physical nodes so you can scale beyond the write or storage limits of a single machine. The hard part is never the modulo operation; it is routing, rebalancing, and operational recovery. Pick a shard key by workload, not by aesthetics. A beautiful key that creates hotspots is still a bad key.

Minimal Example

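```javascript
const shardCount = 16;

// `murmur` is assumed to be a stable hash that returns a 32-bit integer;
// the article does not say which implementation is imported.
export function shardForAccount(accountId) {
  const hash = murmur(accountId);
  // `>>> 0` coerces to unsigned so a signed hash cannot yield a negative shard.
  return (hash >>> 0) % shardCount;
}

// Usage: derive the physical table name for a given account.
const shard = `orders_${shardForAccount(accountId)}`;
```

What It Solves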

- Distributes write and storage load across many nodes instead of one vertical bottleneck.

- Lets you isolate tenants or key ranges when one part of the dataset grows faster than the rest.

- Creates a path to incremental scaling when replication alone is no longer enough.
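
Tenant isolation can be layered on top of hash routing with a small override directory. The following is a minimal sketch, not a definitive design: `stableHash`, `pinnedTenants`, `shardForTenant`, and the shard name `orders_dedicated_1` are all hypothetical, and the hash is a self-contained stand-in for whatever stable hash the routing layer really uses.

```javascript
const SHARD_COUNT = 16;

// Stand-in hash (assumption): FNV-1a-style mixing over UTF-16 code units,
// followed by the murmur3 32-bit finalizer to spread the low bits.
function stableHash(text) {
  let h = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    h ^= text.charCodeAt(i);
    h = Math.imul(h, 0x01000193);
  }
  h ^= h >>> 16;
  h = Math.imul(h, 0x85ebca6b);
  h ^= h >>> 13;
  h = Math.imul(h, 0xc2b2ae35);
  h ^= h >>> 16;
  return h >>> 0; // unsigned 32-bit result
}

// Hypothetical directory: tenants that outgrew their shared shard
// are pinned to a dedicated one.
const pinnedTenants = new Map([["tenant-big", "orders_dedicated_1"]]);

function shardForTenant(tenantId) {
  const pinned = pinnedTenants.get(tenantId);
  if (pinned !== undefined) return pinned; // explicit override wins
  return `orders_${stableHash(tenantId) % SHARD_COUNT}`; // default hash routing
}

console.log(shardForTenant("tenant-big"));   // the pinned shard
console.log(shardForTenant("tenant-small")); // one of orders_0 .. orders_15
```

The directory stays small because it only lists exceptions. Every other tenant still routes by hash, so the common path needs no lookup service.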

Failure Modes

- Choosing a shard key with low cardinality or obvious hotspots, such as region or timestamp alone.

- Building cross-shard joins and transactions into the critical path without budgeting for them.

- Treating resharding as a future problem even though every shard scheme eventually meets it.
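
The first failure mode can be checked before any data moves by replaying a sample of the workload through a candidate key and comparing the busiest shard to the average. The sketch below uses a synthetic workload and hypothetical helpers (`stableHash`, `shardLoads`, `skew`); a real evaluation would replay production traffic instead.

```javascript
// Stand-in hash (assumption): FNV-1a-style mixing plus the murmur3 finalizer.
function stableHash(text) {
  let h = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    h ^= text.charCodeAt(i);
    h = Math.imul(h, 0x01000193);
  }
  h ^= h >>> 16;
  h = Math.imul(h, 0x85ebca6b);
  h ^= h >>> 13;
  h = Math.imul(h, 0xc2b2ae35);
  h ^= h >>> 16;
  return h >>> 0;
}

// Count how many rows land on each shard for a given key extractor.
function shardLoads(rows, keyOf, shardCount) {
  const loads = new Array(shardCount).fill(0);
  for (const row of rows) {
    loads[stableHash(String(keyOf(row))) % shardCount] += 1;
  }
  return loads;
}

// Skew = busiest shard's load divided by the mean load; 1.0 is perfectly even.
function skew(loads) {
  const total = loads.reduce((sum, n) => sum + n, 0);
  return Math.max(...loads) / (total / loads.length);
}

// Synthetic workload (assumption): 10,000 orders, 3 regions, 2,000 accounts.
const regions = ["us", "eu", "ap"];
const rows = [];
for (let i = 0; i < 10000; i++) {
  rows.push({ region: regions[i % 3], accountId: `acct-${i % 2000}` });
}

const byRegion = shardLoads(rows, (row) => row.region, 16);
const byAccount = shardLoads(rows, (row) => row.accountId, 16);

console.log("region skew: ", skew(byRegion).toFixed(2));
console.log("account skew:", skew(byAccount).toFixed(2));
```

With only three distinct region values, at most three of the sixteen shards can receive any rows, so the busiest shard carries more than five times the mean load no matter how good the hash is. The account key has enough distinct values for the hash to spread them.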

Production Checklist

- Model read and write distribution before locking the shard key into public contracts.

- Separate routing logic from business logic so rebalancing is manageable.

- Plan migration and rebalancing tooling before the first shard is full.
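
One way to keep routing separate from business logic is to hash keys into a fixed number of logical buckets and hold the bucket-to-shard assignment in a table that only the router owns. This is a sketch under stated assumptions, not the article's prescribed design: `ShardRouter`, `BUCKET_COUNT`, `moveBucket`, and the shard names are hypothetical, and copying the bucket's data before the switch is left out.

```javascript
const BUCKET_COUNT = 1024; // assumed fixed for the life of the dataset

// Stand-in hash (assumption): FNV-1a-style mixing plus the murmur3 finalizer.
function stableHash(text) {
  let h = 0x811c9dc5;
  for (let i = 0; i < text.length; i++) {
    h ^= text.charCodeAt(i);
    h = Math.imul(h, 0x01000193);
  }
  h ^= h >>> 16;
  h = Math.imul(h, 0x85ebca6b);
  h ^= h >>> 13;
  h = Math.imul(h, 0xc2b2ae35);
  h ^= h >>> 16;
  return h >>> 0;
}

class ShardRouter {
  constructor(shardNames) {
    // bucket index -> shard name, assigned round-robin to start with
    this.bucketToShard = Array.from(
      { length: BUCKET_COUNT },
      (_, bucket) => shardNames[bucket % shardNames.length]
    );
  }

  bucketFor(key) {
    return stableHash(String(key)) % BUCKET_COUNT;
  }

  shardFor(key) {
    return this.bucketToShard[this.bucketFor(key)];
  }

  // Rebalancing step: repoint one bucket once its data has been copied.
  moveBucket(bucket, targetShard) {
    this.bucketToShard[bucket] = targetShard;
  }
}

// Business code only ever asks the router where a key lives.
const router = new ShardRouter(["orders_0", "orders_1", "orders_2", "orders_3"]);
const before = router.shardFor("acct-42");
router.moveBucket(router.bucketFor("acct-42"), "orders_4");
const after = router.shardFor("acct-42");
console.log(before, "->", after);
```

The design choice here is that the hash-to-bucket step never changes, so adding a shard moves only the buckets you reassign. Under plain `hash % shardCount`, growing from 16 to 17 shards remaps about 16 of every 17 uniformly hashed keys.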

Closing

Sharding is an operations decision expressed in data layout. If the routing and rebalance story is weak, the scale story is weak too.